Background

Experimentally driven research on “Future Networks” in Europe began over 40 years ago. It grew out of the “technological gap” discussion that emerged in Europe in the mid-1960s and put America’s leading role in exploiting technology in the limelight. By March 1965, the EEC had decided to address this problem on a European scale by setting up a working group within the medium-term Economic Policy Committee. Although the name of this group changed several times, it is best known as the PREST Group. Its mission was “to examine the problems involved in developing a co-ordinated policy for scientific and technological research, bearing in mind the possibility of co-operations with non-member countries”, a brief that still sounds familiar. [Source: A brief History of European Union Research Policy, European Commission, Studies 5, October 1995]

This initiative was accompanied by a series of studies carried out by the OECD and officially published in 1968 under the title “Gaps in Technology”. The series analysed both the inherent nature of the technological gap and its economic, social and political causes and effects. The French author and journalist Jean-Jacques Servan-Schreiber, who influenced the discussions at the time with his bestseller “Le défi américain” (“The American Challenge”, 1967), could not resist commenting on the OECD findings with the prediction that “fifteen years from now the world’s third greatest industrial power, just after the United States and Russia, may not be Europe, but American industry in Europe”.

The most surprising conclusion of the OECD authors, however, was: “Europe primarily had a management rather than a scientific problem to solve.” [“Gaps in Technology”]

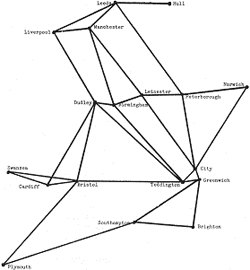

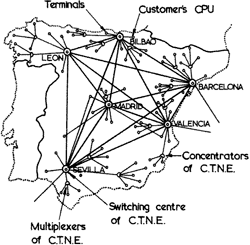

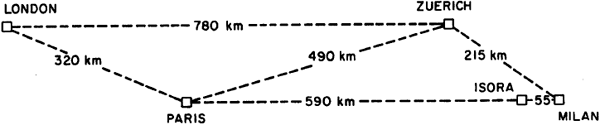

As a result of that debate, COST [Coopération européenne dans le domaine de la recherche scientifique et technique] was created in 1969, and a treaty to build a pan-European Informatics Network was signed two years later, on November 23 [UN treaty No. 20750]. “COST Project 11 – A European Informatics Network (EIN)” was set up as a European international research project to study informatics network techniques, with the following purposes:

- to facilitate the exchange of ideas between the computer centres which it links;

- to provide a forum for the discussion and comparison of schemes now being proposed for the exchange of information between computers;

- to provide a potential model for future networks, whether for commercial or other purposes.

Similar to the development of the ARPANET in the USA, only the younger generation of scientists showed interest in joining this research project. Apart from the British scientists from the National Physical Laboratory and the French Cyclades team, none of the European researchers had built a network before. Some of them had learned about the ARPANET while studying time-sharing systems in the USA. Pietro Schicker, for instance, who became responsible for the Swiss node of the “European Informatics Network” in the early 1970s, found time to read the AFIPS (American Federation of Information Processing Societies) proceedings about the ARPANET while on a ship back home to Europe.

‘Social’ ties and individualistic interests turned out to be highly influential for the successful deployment of the EIN in 1977. Yet the impact of these efforts on Internet research in Europe was disappointing. European industry remained in the wings, and instead of coordinating their research, the remaining players founded divergent projects that followed different national interests. Derek Barber, technical director of the research project, wrote in 1973 about the European situation: “Many of you will know that the intention is to build a European packet-switching data network that (dare I say it) won’t cost a packet!”

Making a difference: The European OSI paradigm

In contrast to the earlier experimentally driven research, OSI’s “European” paradigm in the 1980s was to specify complex system architectures top-down. Consequently, European inventions such as datagrams were ignored by X.25 and later also by the ISO-OSI standardisation bodies, while being implemented in TCP/IP, as an American invention. The French networking pioneer Louis Pouzin tried his utmost to influence the standardisation decision process in Geneva during the 1970s. He did not stand a chance.

When comparing the historic situation to that of the current Future Internet work programme, we see that collaboration to further standardisation is still strongly encouraged. However, while standardisation is important, there are underlying questions that need clarification beforehand: What are the appropriate forms and processes, and for whom, when trying to standardise aspects of Future Networks?

Networking 2020

It seems we are now somehow back at the beginning. European, American and Asian scientists, politicians and entrepreneurs are competing once again. We are currently witnessing a proliferation of ambitious proposals for a “fresh generation” of networks from various regions of the world. In the US, conceptual work for GENI, a “continental-scale, programmable, heterogeneous, networked” system driving a “clean slate” paradigm, was launched in 2004, only to be superseded, at least partly, by the FIND initiative in 2006 and the NetSE council in 2008. Japan has rolled out a “New” as well as a “Next Generation” networking programme, the latter launched in spring 2008. China has established its CNGI, and several EU member states are currently embarking on their own national Future Internet initiatives, which are complemented by EC FP7 projects and the Digital Agenda.

In parallel, large-scale experimental research facilities are being set up at national and international level. Examples are PlanetLab in the USA and its European equivalent OneLab, which are hammering out protocols and paradigms. The “clean slate” approach that guides, for example, the efforts of GENI as well as those of the Japanese “new generation networking” project once aimed at “getting rid of IP”. In 2011, the “new networking thinking” covers OpenFlow, delay- and disruption-tolerant networks, virtualisation of nearly everything, and the hypothesis that the time is right to build a network architecture that follows the publish/subscribe paradigm (Information Centric Internet).